Oxen.ai Blog

Welcome to the Oxen.ai blog 🐂

The team at Oxen.ai is dedicated to helping AI practitioners go from research to production. To help enable this, we host a research paper club on Fridays called ArXiv Dives, where we go over state-of-the-art research and how you can apply it to your own work.

Take a look at our ArXiv Dives and Practical ML Dives, as well as a treasure trove of content on how to go from raw datasets to production-ready AI/ML systems. We cover everything from prompt engineering, fine-tuning, computer vision, natural language understanding, generative AI, and data engineering to best practices for versioning your data. So, dive in and explore – we're excited to share our journey and learnings with you 🚀

LTX-2 is a video generation model that can generate not only video frames but audio as well. This model is fully open source, meaning the weights and the code are available for...

Imagine you are shooting a film and you realize that you have the actor wearing the wrong jacket in a scene. Do you bring the whole cast back in to re-shoot? Depending on the actor...

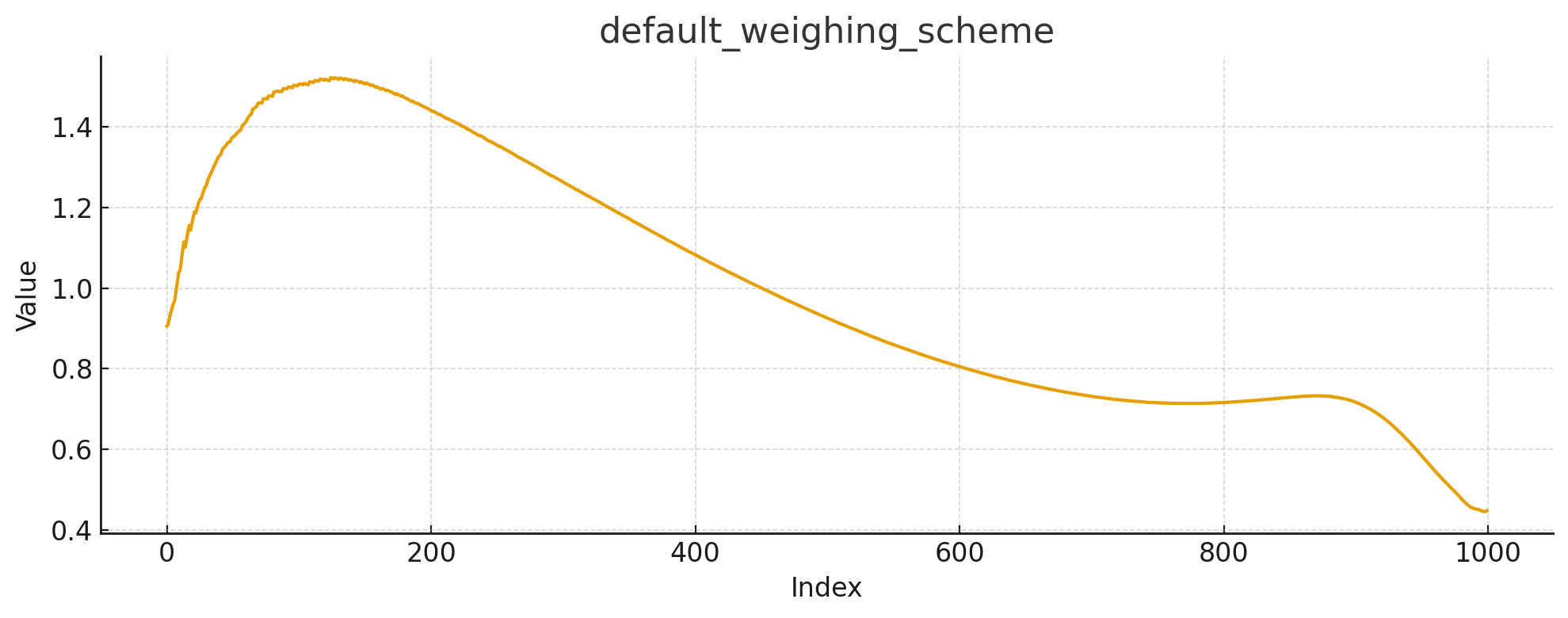

At Oxen.ai, we think a lot about what it takes to run high-quality inference at scale. It’s one thing to produce a handful of impressive results with a cutting-edge image editing m...

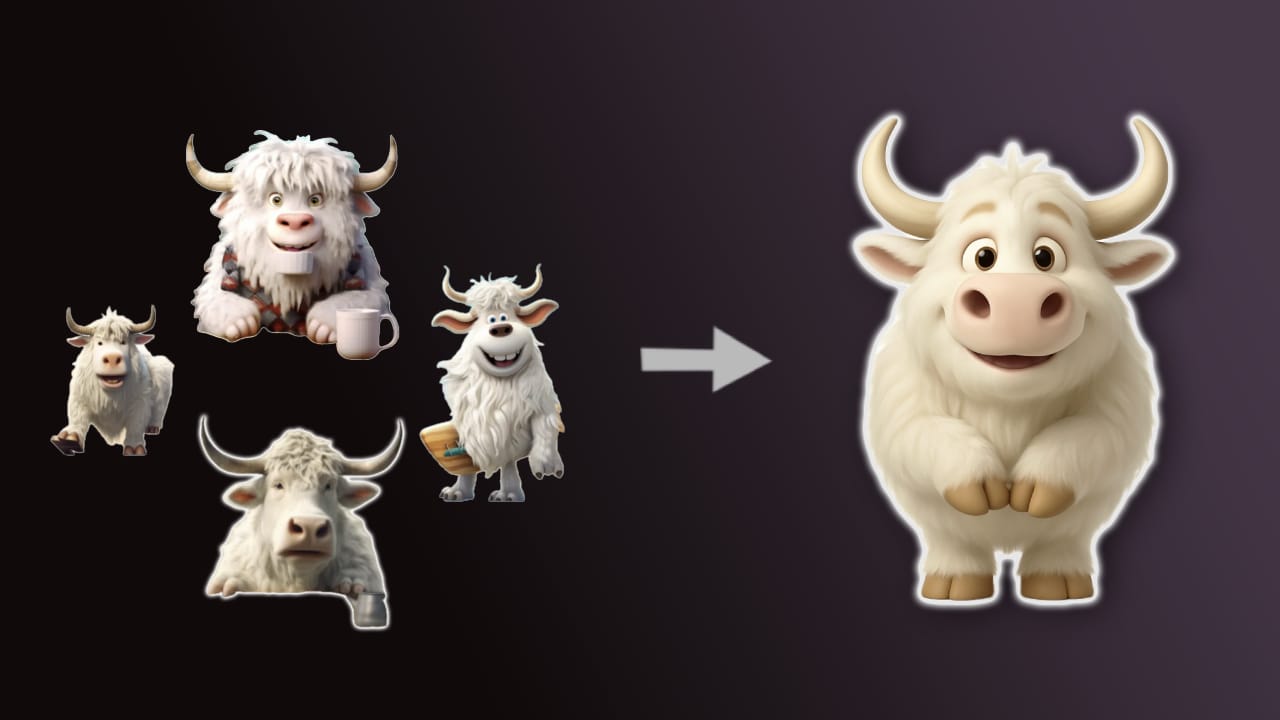

Fine-tuning Diffusion Models such as Stable Diffusion, FLUX.1-dev, or Qwen-Image can give you a lot of bang for your buck. Base models may not be trained on a certain concept or st...

Welcome back to Fine-Tuning Friday, where each week we try to put some models to the test and see if fine-tuning an open-source model can outperform whatever state of the art (SOTA...

OpenAI came out with GPT-OSS 120B and 20B in August 2025, the first “Open” LLMs from OpenAI since GPT-2, over six years ago. The idea of fine-tuning a frontier OpenAI model was exc...

Welcome to Fine-Tuning Fridays, where we share our learnings from fine-tuning open source models for real world tasks. We’ll walk you through what models work, what models don’t an...

FLUX.1-dev is one of the most popular open-weight models available today. Developed by Black Forest Labs, it has 12 billion parameters. The goal of this post is to provide a barebo...

Can we fine-tune a small diffusion transformer (DiT) to generate OpenAI-level images by distilling off of OpenAI images? The end goal is to have a small, fast, cheap model that we ...

Welcome to a new series from the Oxen.ai Herd called Fine-Tuning Fridays! Each week we will take an open source model and put it head to head against a closed source foundation mod...